Publications

Graphics-related research projects that have already been published.

Machine Learning for Graphics

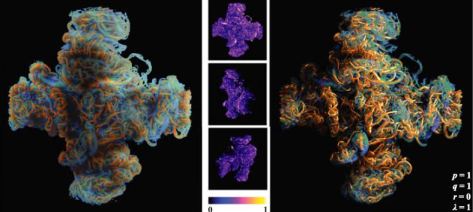

N-Dimensional Gaussians for Fitting of High Dimensional Functions

Recent hybrid and explicitly learned representations exhibit promising performance and quality characteristics. However, their scaling to higher dimensions is challenging, e.g. when accounting for dynamic content or additional parameters such as material properties or illumination. We tackle these challenges for explicit representations based on Gaussian mixture models. We introduce a high-dimensional culling scheme that efficiently bounds N-D Gaussians, and a loss-adaptive density control scheme that incrementally guides the use of additional capacity towards missing details. Our compact representation is optimized in minutes and rendered in milliseconds.

Stavros Diolatzis, Tobias Zirr, Alexandr Kuznetsov, Georgios Kopanas, Anton Kaplanyan

SIGGRAPH 2024 Conference Proceedings (to appear)

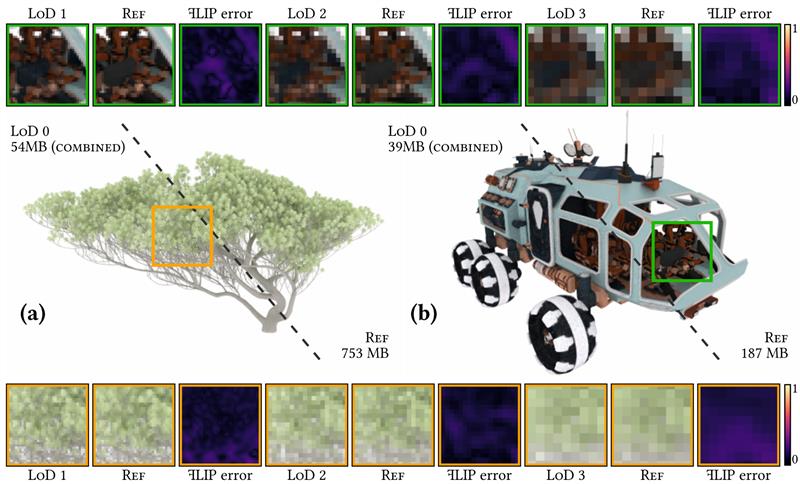

Neural Prefiltering for Correlation-Aware Levels of Detail

We introduce a neural toolset for compressed storage of high-detail geometry and appearance, seamlessly connecting both neural rendering and real-time path tracing in one unified framework.

With their good aggregation capabilities, neural rendering techniques typically fulfill the goal of uniform convergence, uniform computation, and uniform storage across a wide range of visual complexity and scales.

With our neural LoD representation, we achieve compression rates of 70–95% compared to classic source representations, while also improving quality over previous work, at interactive to real-time framerates depending on distances at which the LoD technique is applied.

Philippe Weier, Tobias Zirr, Anton Kaplanyan, Ling-Qi Yan, and Philipp Slusallek

ACM Transactions on Graphics (SIGGRAPH 2023)

Monte Carlo Rendering

Planetary Shadow-Aware Distance Sampling

Dusk and dawn scenes have been difficult for brute-force path tracers when explicitly tracing light transport in the atmosphere. We identify a major source of the inefficiency in samples wasted on the denser lower atmosphere that is in shadow when the star sets below the horizon. We overcome this issue by restricting direct sampling of the star to only unshadowed ray segments, based on boundaries found by intersecting a shadow cylinder fit to the planet for each emitter direction. We retain transmittance sampling by mapping distance boundaries to opacity space, where visible segments can be sampled uniformly. We achieve significant convergence speedups compared to brute-force results of similar quality.

Carl Breyer and Tobias Zirr

Eurographics Proceedings 2022
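
The opacity-space mapping mentioned in the abstract above can be illustrated with a small sketch. This is not the paper's implementation: it assumes a homogeneous medium with constant extinction `sigma_t` (the paper targets a planetary atmosphere), it assumes the unshadowed segments are already known as finite, sorted, non-overlapping distance intervals, and the function name `sample_distance_in_segments` is hypothetical.

```python
import math

def sample_distance_in_segments(segments, sigma_t, u):
    """Sample a distance t inside the given visible segments.

    Assumptions (illustrative only): constant extinction sigma_t, finite
    sorted non-overlapping segments [(t0, t1), ...], and u in [0, 1).
    Returns (t, pdf), where pdf is proportional to sigma_t * exp(-sigma_t * t)
    restricted to the segments.
    """
    # Map distance boundaries to opacity space: O(t) = 1 - exp(-sigma_t * t).
    def opacity(t):
        return 1.0 - math.exp(-sigma_t * t)

    spans = [(opacity(t0), opacity(t1)) for t0, t1 in segments]
    total = sum(o1 - o0 for o0, o1 in spans)

    # Pick a point uniformly over the concatenated visible opacity spans.
    target = u * total
    o = spans[-1][1]  # fallback for floating-point round-off
    for o0, o1 in spans:
        width = o1 - o0
        if target <= width:
            o = o0 + target
            break
        target -= width

    # Invert the opacity mapping to recover the distance.
    t = -math.log(1.0 - o) / sigma_t
    pdf = sigma_t * math.exp(-sigma_t * t) / total
    return t, pdf
```

Sampling uniformly in opacity space is equivalent to sampling distances proportionally to transmittance-weighted extinction, so skipping shadowed intervals only changes the normalization constant `total`.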

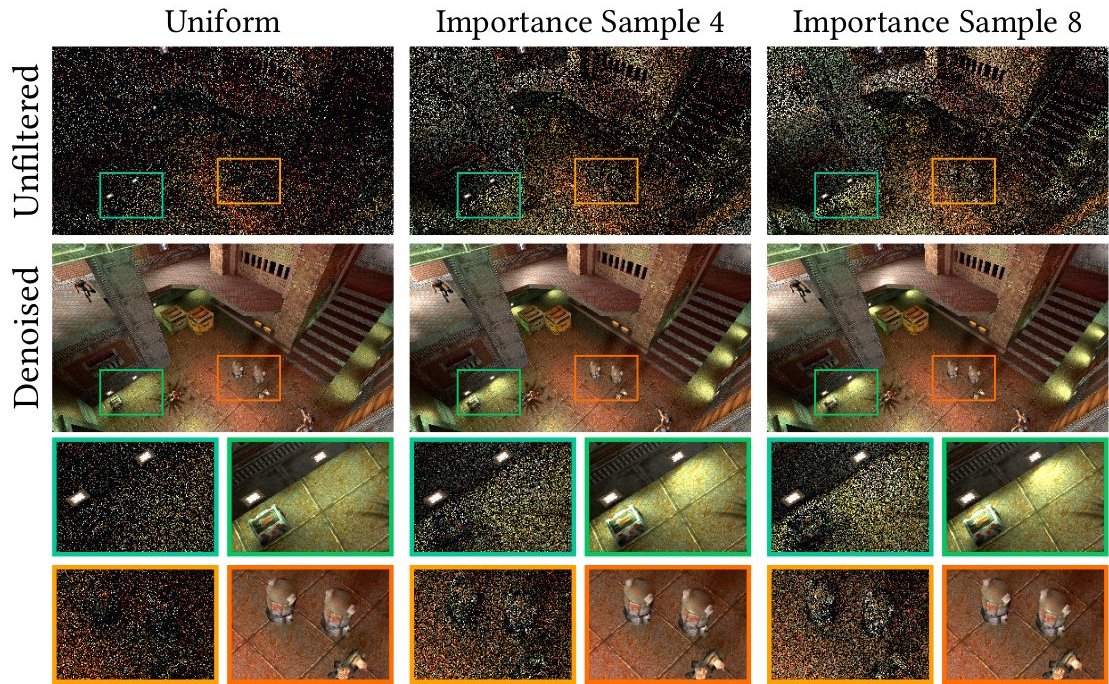

Light Sampling in Quake 2 Using Subset Importance Sampling

In the context of path tracing, to compute high-quality lighting fast, good stochastic light sampling is just as important as light culling is in the context of raster graphics. In order to enable path tracing in Quake 2, multiple solutions were evaluated. Here, we describe the light sampling solution that ended up in the public release. We detail potential extensions, allowing a pseudo-marginal form of MIS, and why these did not end up in the final release. We also discuss more advanced alternatives to our approach. To this end, we provide a short overview of applicable recent techniques like ReSTIR, real-time path guiding, and stochastic lightcuts.

Ray Tracing Gems II pp 765–790

Path Differential-Informed Stratified MCMC and Adaptive Forward Path Sampling

We address MCMC practicability issues, opening up path space MCMC as a more general adaptive framework. To counter non-uniform image quality we derive an analytic target function for image-space sample stratification. It is based on a novel connection between variance and path differentials, allowing analytic variance estimates for MC samples (with potential uses in adaptive algorithms outside MCMC). We also apply our theoretical framework to optimize an adaptive MCMC algorithm that only uses forward path construction, in contrast to many previous MCMC techniques that rely on bi-directional path tracing. Notably, we adapt a full-featured path tracer (with minimal changes) into a single-path state space Markov Chain, bridging another gap between MCMC and MC.

ACM Transactions on Graphics, 39, 6, Article 246

Reweighting Firefly Samples for Improved Finite-Sample Monte Carlo Estimates

Bright samples with low sampling probability, often called fireflies, occur in all practical Monte Carlo renderers and are part of computing unbiased estimates. For finite-sample estimates, however, they can lead to excessive variance. Rejecting all such samples as outliers, as suggested in previous work, leads to estimates that are overly biased and can cause undesirable artifacts. In this paper, we show how samples can be reweighted depending on their contribution and sampling frequency, such that the finite-sample estimate can in fact get closer to the correct expected value, and the overall image noise (variance) can be controlled.

Computer Graphics Forum (2018)

Line Integration for Rendering Heterogeneous Emissive Volumes

Without special handling, rendering emissive media is challenging: in thin regions where only a few scattering events occur, emission is poorly sampled. Importance sampling by emission can be disadvantageous, too, since it neglects absorption in dense regions.

In order to be able to use all emission events we encounter along line segments inside volumes, we extend the standard path space measurement contribution such that it allows collecting all emission along randomly sampled path segments, rather than just at path vertices, while retaining unbiasedness.

Florian Simon, Johannes Hanika, Tobias Zirr, and Carsten Dachsbacher

Computer Graphics Forum (Proc. of EGSR 2017)

Visualization

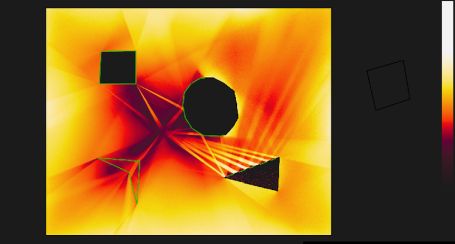

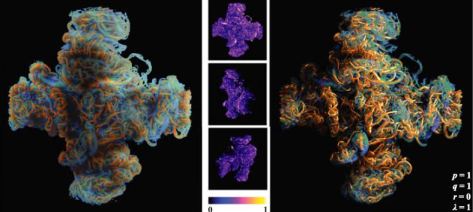

Visualization of Coherent Structures of Light Transport

Inspired by vector field topology, an established tool for the extraction and identification of important features of flows and vector fields, we develop means for the analysis of the structure of light transport. We derive an analogy to vector field topology that defines coherent structures in light transport. We introduce Finite-Time Path Deflection (FTPD), a scalar quantity that represents the deflection characteristic of all light transport paths passing through a given point in space. For virtual scenes, the FTPD can be computed directly using path-space Monte Carlo integration. We show that the coherent regions visualized by the FTPD are closely related to the coherent regions in our new topologically-motivated analysis of light transport. FTPD visualizations are thus also visualizations of the structure of light transport.

Tobias Zirr and Carsten Dachsbacher

Computer Graphics Forum (Proc. of EuroVis 2015)

Extinction-Optimized Volume Illumination

Visualization can benefit from advanced rendering techniques also used for photo-realistic image synthesis. However, high contrast, shadows, and occlusion can limit the usefulness of such visualizations. We present a method to optimize the attenuation of light for visualization purposes.

Using an importance function, more light can be transmitted to and from the features of interest, improving their visibility, while contextual structures still cast shadows that give cues for the perception of depth.

Our work is inspired by previous visibility optimization work for surfaces, but significantly improves the efficiency of the optimization, which is crucial for making it scale to volumetric data sets: By converting the smoothing terms used in previous work into a separable pre-filtering step on the input data, we manage to find a closed-form solution for the optimal extinction terms along a view or shadow ray, thus achieving interactive performance.

Marco Ament, Tobias Zirr, and Carsten Dachsbacher

IEEE Transactions on Visualization and Computer Graphics, July, 2017

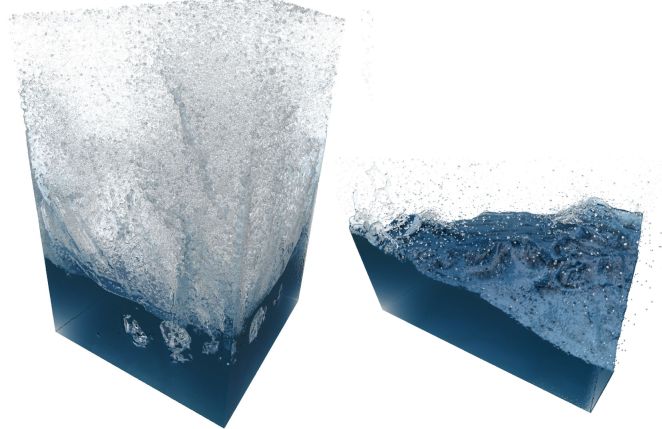

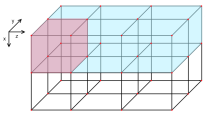

Memory-Efficient On-The-Fly Voxelization of Particle Data

We present a GPU-friendly real-time voxelization technique for rendering homogeneous media that is defined by particles, e.g. fluids obtained from particle-based simulations such as Smoothed Particle Hydrodynamics (SPH). Our method computes view-adaptive binary voxelizations with on-the-fly compression of a tiled perspective voxel grid, achieving higher resolutions than previous approaches. It allows interactive rendering with complex effects such as ray casting-based refraction and reflection, light scattering and absorption, and ambient occlusion. In contrast to previous methods, it does not rely on expensive preprocessing.

Tobias Zirr and Carsten Dachsbacher

IEEE Transactions on Visualization and Computer Graphics, May, 2016

Real-time Rendering

Real-time Rendering of Procedural Multiscale Materials

We present a stable shading method and a procedural shading model that enable real-time rendering of sub-pixel glints and anisotropic microdetails resulting from irregular microscopic surface structure, in order to simulate a rich spectrum of appearances ranging from sparkling to brushed materials. We introduce a biscale Normal Distribution Function (NDF) for microdetails to provide convenient artistic control over both the global appearance and the appearance of the individual microdetail shapes, while efficiently generating procedural details.

Tobias Zirr and Anton Kaplanyan

Proc. of i3D 2016

Distortion-free Displacement Mapping

Displacement mapping a textured surface introduces distortions of the displaced surface's texture. Our approach corrects this by counter-distorting the other texture maps according to the displacement map. We describe a fast and simple, fully GPU-based two-step procedure to resolve this problem. First, a correction deformation is computed from the displacement map. Second, we apply the correction deformation to the texture coordinates used for surface texture lookups, counteracting the uneven distortion due to displacement mapping.

Tobias Zirr and Tobias Ritschel

Computer Graphics Forum (Proc. of HPG 2019)

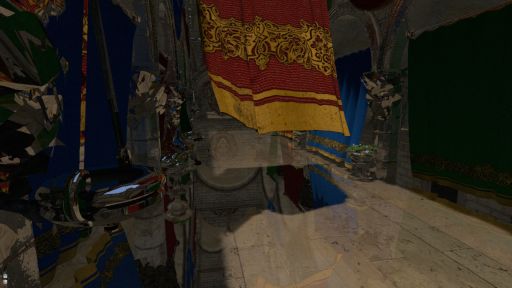

Object-order Ray Tracing for Fully Dynamic Scenes

Ray tracing poses specific challenges on parallel architectures such as GPUs: Each ray may hit different objects with differing materials and textures, requiring potentially divergent shading calculations and random access to larger parts of the scene description. In contrast, traditional GPU rasterization pipelines require only linear scene access and trivially support fully dynamic scene geometry. In this work, we explored an object-order method for tracing incoherent secondary rays that has similar properties and flexibility, implemented in the standard graphics pipeline. Thus, the ability to generate, transform and animate geometry via shaders is fully retained. Our method does not distinguish between static and dynamic geometry.

GPU Pro 5

External blog posts

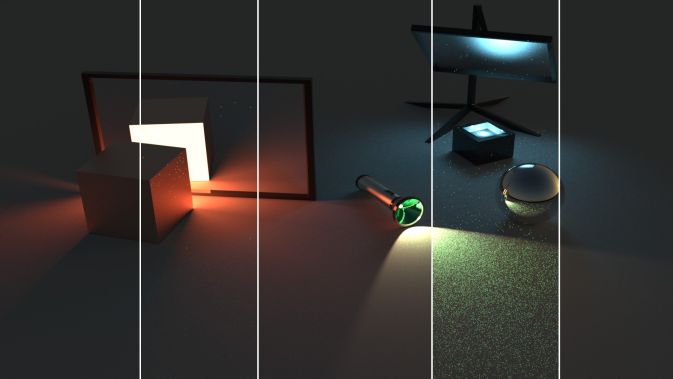

Realism by scalable tracing of light travelling through neural representations

Path tracing is an elegant algorithm that can simulate many of the complex ways that light travels and scatters in virtual scenes. With classic computer graphics representations, it uses ray tracing to determine the visibility between scattering events. Integrating neural objects into a rendered scene requires efficient equivalent operations which determine the space occupied by their neural representations.

Our work builds a bridge between fully neural and fully classic representations, used in a single unified path tracer. We provide one example of a classically hard problem that is solved elegantly by neural optimization tools.

As the software and hardware ecosystem matures, and as the development of neural solutions for real-time graphics and games becomes less of a challenge with better integration into existing renderers, game engines, and creation workflows, we expect more exciting solutions to be built.

Intel developer blog

Path-traced Quake 2 Overview and Introductory Materials

Q2VKPT was created by Christoph Schied as the first playable game that is entirely raytraced and efficiently simulates fully dynamic lighting in real time, using the same modern techniques as the movie industry.

I mainly contributed emitter sampling code and a project website that provides an introductory overview with answers to the most common questions.

The purpose of this project was to find out exactly what's still missing for a clearer pathway into a raytraced future of game graphics. It was later continued in the form of Quake 2 RTX, with many re-appearances in benchmarks as the field kept evolving and more path-traced game titles started to emerge.

Q2VKPT project website

Articles on the archived blog

Rendering Ice in Liquidiced

Step-by-step breakdown of the procedural multi-scale glints and shading done in the ice/snow shaders of the 64k demo "liquidiced".

Three not-so-cute Anti-Aliasing Tricks

A few workarounds for fixing post-processing effects in graphics pipelines without direct access to the subpixel samples of anti-aliased render targets.

Simplistic Marching Cubes Optimization

Some optimizations to naive Marching Cubes mesh generation in order to efficiently obtain a compact, indexed mesh that avoids redundant evaluation of procedural generator functions.
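
To illustrate the general idea of an indexed mesh without redundant generator evaluations, here is a generic sketch. It is not taken from the article: the class `IndexedMeshBuilder` and its methods are hypothetical names, the Marching Cubes case tables are omitted, and the caller is assumed to pass only grid edges that actually cross the isosurface.

```python
class IndexedMeshBuilder:
    """Caches density samples per grid corner and vertices per grid edge."""

    def __init__(self, density, iso=0.0):
        self._density = density      # procedural generator f(x, y, z)
        self.iso = iso
        self.vertices = []           # unique vertex positions
        self.indices = []            # triangle list indexing into vertices
        self.evaluations = 0         # number of generator calls made
        self._samples = {}           # corner -> cached density value
        self._edge_to_index = {}     # (corner_a, corner_b) -> vertex index

    def sample(self, corner):
        # Evaluate the generator at most once per grid corner.
        if corner not in self._samples:
            self.evaluations += 1
            self._samples[corner] = self._density(*corner)
        return self._samples[corner]

    def vertex_on_edge(self, a, b):
        # Order the corners so both directions map to the same key.
        key = (min(a, b), max(a, b))
        idx = self._edge_to_index.get(key)
        if idx is None:
            fa, fb = self.sample(key[0]), self.sample(key[1])
            # Linear interpolation of the isosurface crossing (fa != fb assumed).
            t = (self.iso - fa) / (fb - fa)
            p = tuple(pa + t * (pb - pa) for pa, pb in zip(key[0], key[1]))
            idx = len(self.vertices)
            self.vertices.append(p)
            self._edge_to_index[key] = idx
        return idx

    def add_triangle(self, edges):
        # edges: three (corner_a, corner_b) pairs, one per triangle vertex.
        self.indices.extend(self.vertex_on_edge(a, b) for a, b in edges)
```

Neighboring cells share edges and corners, so keying the caches on integer grid coordinates lets adjacent triangles reuse both vertex indices and density samples.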